The project is complete, and both the journey and the result are better than we dared to hope! Through sweat, toil and solder we managed to create a sentient killing digging-machine capable of groundbreaking, turning and plant recognition.

The products

The team created innovative devices including, but not limited to:

• A power supplying trailer

• A glorious HUD, allowing the user to make smart, informed decisions

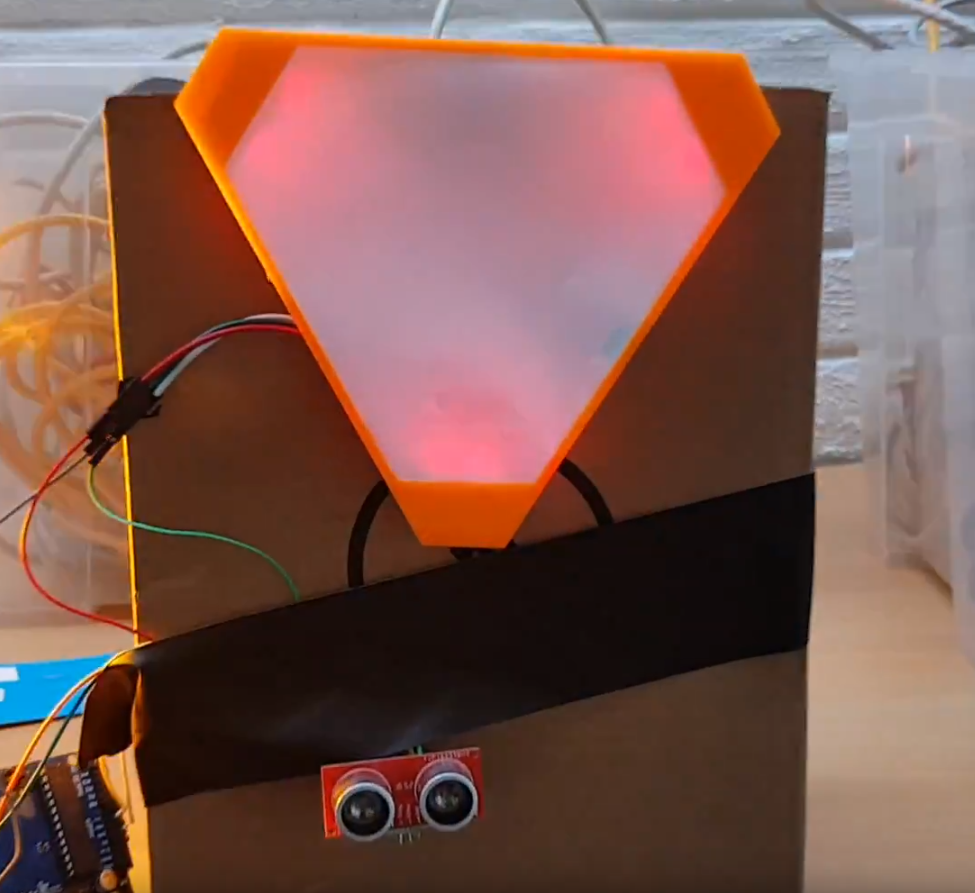

• A warning system designed for maximum reliability

• An image recognition system capable of saving plants, and potentially, humans

• An amazing native controller for your phone written in Kotlin

• 3D printable keyboards for controlling just about anything

• Insanely effective lights, based on assembly code

• An AWESOME button, which will state that everything is awesome

A warning sign with proximity detection. It turns red when you get too close. The possibilities here are endless. Why do you even need smart cars, when you can have smart signs?!

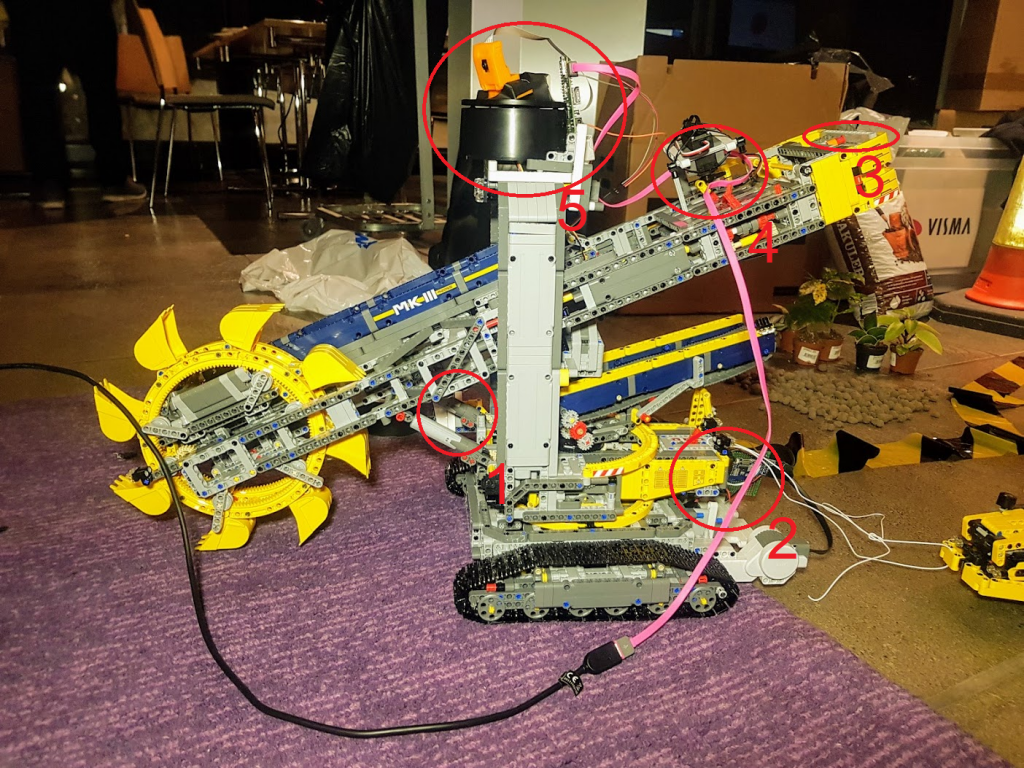
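For the curious, the logic behind such a sign boils down to a threshold check on a distance reading. The sketch below is only an illustration: the 50 cm threshold, the function name and the idea that the sensor reports centimetres are all assumptions, not details from our actual build.

```python
# Hypothetical threshold: the distance at which the sign reacts is an assumption.
WARNING_DISTANCE_CM = 50.0


def sign_is_red(distance_cm: float, threshold_cm: float = WARNING_DISTANCE_CM) -> bool:
    """Return True when the measured distance is closer than the threshold."""
    if distance_cm < 0:
        raise ValueError("distance cannot be negative")
    return distance_cm < threshold_cm


# Simulated readings standing in for a real proximity sensor.
for reading in (120.0, 60.0, 30.0):
    state = "red" if sign_is_red(reading) else "not red"
    print(f"{reading:.0f} cm: {state}")
```

On real hardware the readings would come from whatever proximity sensor is wired to the sign, and the boolean would drive the LEDs.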

Our main creation is the modified version of the Technic Lego Bucket Wheel Excavator which we won in ARIoT 2018.

With these main features:

- Raspberry Pi with camera mounted in the cockpit of the monster (the camera that is, the Pi did not quite fit inside). This camera provided a live stream for our dashboard (which could also remote control the machine).

- Engines with a bit more power than the ones the Excavator normally comes with (and the ability to turn).

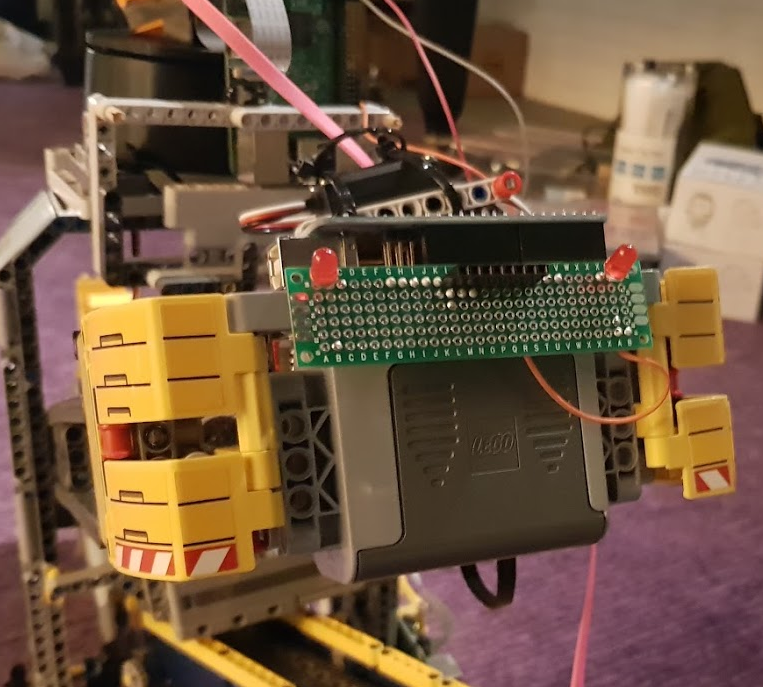

- Rear blinking lights (written in assembly … you really need to optimize those blinks).

- The ability to flip the Lego switches. Easier than actually rebuilding!

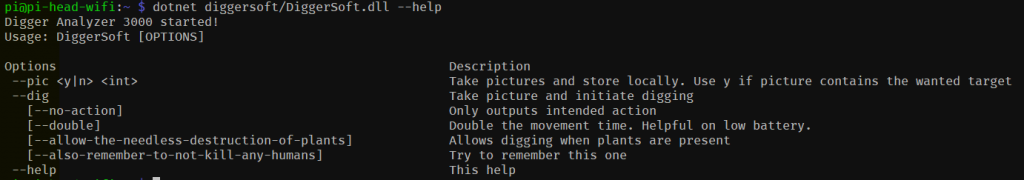

- And on top, the crown of the masterpiece: another Raspberry Pi with another camera, also hooked up to a servo. This part rotates back and forth, taking pictures. These pictures are then analyzed with State of the Art AI to decide whether we want to move the Excavator in that direction or not, and the resulting commands are sent as messages to the engines.

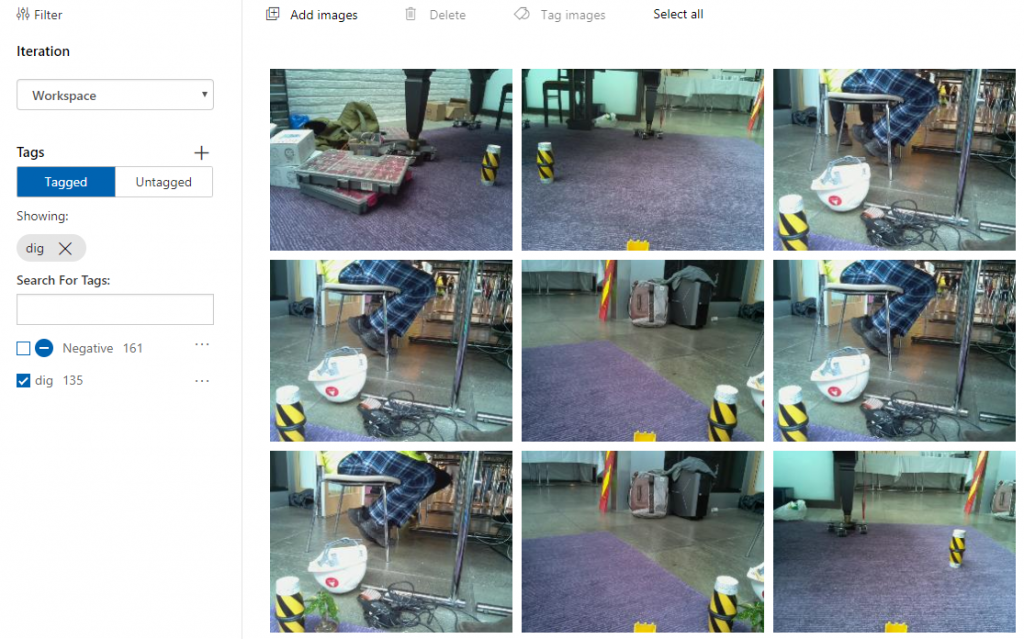

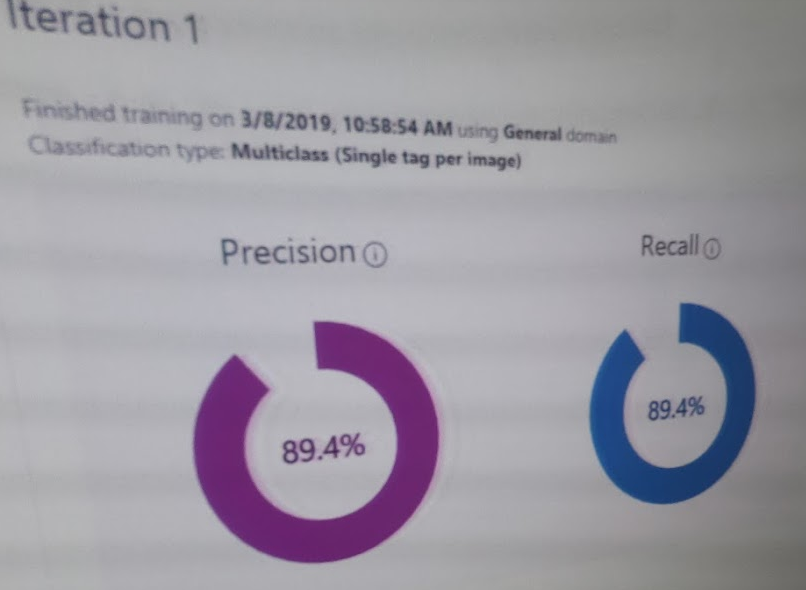

The AI part here was created with Azure Custom Vision Service. We took over 100 images with our “target” and 100 without, and used them to train the service to recognize it. It worked surprisingly well.

We also did the same thing with and without plants, so we could check whether there were environmental concerns about the chosen digging site.
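To make the idea a bit more concrete, here is a minimal sketch of how one sweep of the camera could be turned into a driving decision. It is not our actual code: the `Snapshot` type, both thresholds and the rule "most confident target without a plant wins" are assumptions made for illustration, and the probabilities stand in for whatever the trained classifiers return.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Snapshot:
    angle_deg: int       # servo angle at which the picture was taken
    target_prob: float   # classifier confidence that the target is visible
    plant_prob: float    # classifier confidence that a plant is visible


# Assumed thresholds, not values from the real project.
TARGET_THRESHOLD = 0.7
PLANT_THRESHOLD = 0.5


def choose_direction(snapshots: List[Snapshot]) -> Optional[int]:
    """Pick the angle with the most confident target and no plant in the way.

    Returns None when no direction is both interesting and plant-free.
    """
    candidates = [
        s for s in snapshots
        if s.target_prob >= TARGET_THRESHOLD and s.plant_prob < PLANT_THRESHOLD
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda s: s.target_prob).angle_deg


# One simulated sweep: left, straight ahead, right.
sweep = [
    Snapshot(angle_deg=-45, target_prob=0.9, plant_prob=0.8),  # target, but a plant too
    Snapshot(angle_deg=0, target_prob=0.75, plant_prob=0.1),   # target, no plant
    Snapshot(angle_deg=45, target_prob=0.3, plant_prob=0.0),   # nothing to dig for
]
print(choose_direction(sweep))
```

In this sweep the leftmost picture has the strongest target signal, but the plant check vetoes it, so the sketch settles on driving straight ahead.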

Stacking everything

In the daily life of being a developer you’re usually working with a maximum of one cloud provider for the projects at work, and maybe dabbling with a few on the side when you have some extra time.

As with all hackathons, most stacks aren’t fully decided before you arrive. What not even we could have predicted is that some of our controllers would end up communicating with our digger through not one, but two cloud providers, all of this ending in an MQTT queue running separately on a virtual machine, which our digger would read from. Lightning fast! (really)
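As a rough illustration of what travels through such a queue, the sketch below encodes a driving command as a small JSON payload on the controller side and decodes it on the digger side. The topic name, the set of actions and the payload fields are all hypothetical, and the actual publish and subscribe calls of an MQTT client library are deliberately left out.

```python
import json

# Hypothetical topic and command set; the real names are not in this post.
COMMAND_TOPIC = "digger/commands"
ALLOWED_ACTIONS = {"forward", "backward", "left", "right", "stop"}


def encode_command(action: str, source: str) -> bytes:
    """Build the payload a controller would publish to COMMAND_TOPIC."""
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    return json.dumps({"action": action, "source": source}).encode("utf-8")


def decode_command(payload: bytes) -> str:
    """Parse a payload on the digger side and return the requested action."""
    message = json.loads(payload.decode("utf-8"))
    action = message.get("action")
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    return action


# Round trip: what one controller sends is what the digger acts on.
payload = encode_command("left", source="web-dashboard")
print(COMMAND_TOPIC, decode_command(payload))
```

Validating the action on both ends is a cheap safeguard when several controllers, each arriving through a different route, feed the same queue.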

We did have some more direct controllers through our apps: the Android app, and the web app written in React with a separate NodeJS backend for authentication. These were a tiny bit more responsive, as you skipped at least one cloud provider.

Our team

Through our experience at ARIoT, the team grew from boys to men. Our clean, ill-fitting reflector vests soon tightened around our bulging biceps, chests and bellies as they became smudged with solder and dirt. We all learned and expanded our toolsets within hardware, soldering, cloud and AI. We’re all looking forward to sharing our experience and stories with our friends and colleagues. And even more, we’re looking forward to next year!

Final thoughts

If something can’t be fixed with duct tape, you haven’t used enough, and that’s true for virtual constructions as well.